The New Age of Machine Vision: Understanding, Not Just Seeing

Contemporary machine vision does far more than basic identification. Current systems interpret complex scenes, take context into account, and extract meaning. In automated quality assurance, for example, AI can now classify defect types, infer their likely causes, and trigger corrective actions on the assembly line. This shift from passive observation to active comprehension is driven by convolutional neural networks and vision transformers, which model spatial relationships in visual data with high accuracy. As a result, they have set a new benchmark for precision in industrial inspection.

Powering Perception: The Deep Learning Advantage

This leap in capability is fueled by rapid growth in training data and by more advanced architectures. Vision Transformers, for example, have set performance records on major image benchmarks. They split an image into patches and apply self-attention across those patches, so that each region can relate to every other region of the picture. Foundation vision models are a further step change. These large models, pretrained on vast and varied image collections, adapt quickly to specific tasks with relatively little extra training. As a result, a single pretrained model can serve retail stock tracking, medical image review, and crop health monitoring, a versatility that is highly valuable for industrial automation.
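To make the patch-and-attention idea concrete, here is a minimal NumPy sketch of the first stage of a Vision Transformer: the image is cut into fixed-size patches, each patch is projected to a token vector, and single-head self-attention lets every patch weigh every other patch. It is an illustration under stated assumptions, not a faithful ViT: the weights are random stand-ins for learned parameters, and positional embeddings, the class token, multiple heads, and the MLP layers are omitted.

```python
import numpy as np

def image_to_patches(image: np.ndarray, patch: int) -> np.ndarray:
    """Split an (H, W, C) image into flattened, non-overlapping patches.

    Returns an array of shape (num_patches, patch * patch * C).
    """
    h, w, c = image.shape
    assert h % patch == 0 and w % patch == 0, "image must divide evenly into patches"
    # Reshape into a grid of patches, then flatten each patch into one vector.
    grid = image.reshape(h // patch, patch, w // patch, patch, c)
    grid = grid.transpose(0, 2, 1, 3, 4)
    return grid.reshape(-1, patch * patch * c)

def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention over patch tokens."""
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])
    # Numerically stable softmax over keys: each patch gets a weight for every patch.
    scores -= scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ v, weights

rng = np.random.default_rng(0)
image = rng.random((32, 32, 3))
patches = image_to_patches(image, patch=8)     # 16 patches of 8*8*3 = 192 values
d_model = 64
# Random matrices stand in for learned parameters (assumption for illustration).
w_embed = rng.normal(scale=0.02, size=(patches.shape[1], d_model))
tokens = patches @ w_embed
w_q, w_k, w_v = (rng.normal(scale=0.1, size=(d_model, d_model)) for _ in range(3))
out, attn = self_attention(tokens, w_q, w_k, w_v)
print(out.shape, attn.shape)
```

Each row of `attn` sums to 1 and records how strongly one patch draws on every other patch, which is how distant regions of an image can influence each other within a single layer.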

Agentic AI: The Shift from Analysis to Independent Action

A defining trend for 2025 is the rise of “agentic AI”: systems that perceive, reason, plan, and act on their own. By combining vision-language models with reinforcement learning, AI can now make a sequence of decisions in changing environments. Consider an autonomous mobile robot in a distribution center. It uses live visual data to navigate, recognizes items to pick, and reroutes around obstacles, all without human input. In control systems, this marks a fundamental shift from supportive intelligence to full operational autonomy.
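The perceive-plan-act loop described above can be sketched in a few lines of Python. This is a deliberately simplified stand-in: breadth-first search on an occupancy grid replaces the vision-language model and reinforcement-learning policy the paragraph mentions, and the hypothetical `obstacles_seen_at` dictionary simulates what the robot's cameras would report at each time step.

```python
from collections import deque
from typing import Optional

Cell = tuple[int, int]  # (row, column)

def bfs_path(grid: list[list[int]], start: Cell, goal: Cell) -> Optional[list[Cell]]:
    """Shortest 4-connected path over free cells (0 = free, 1 = blocked), or None."""
    rows, cols = len(grid), len(grid[0])
    prev: dict = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            while cell is not None:            # walk back to the start
                path.append(cell)
                cell = prev[cell]
            return path[::-1]
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0 and nxt not in prev:
                prev[nxt] = cell
                queue.append(nxt)
    return None

def navigate(grid: list[list[int]], start: Cell, goal: Cell,
             obstacles_seen_at: dict) -> list[Cell]:
    """Move one cell per step; replan whenever a newly perceived obstacle blocks the plan.

    obstacles_seen_at is a hypothetical stand-in for perception: {time step: blocked cell}.
    """
    pos, trace = start, [start]
    plan = bfs_path(grid, pos, goal)           # Plan
    step = 0
    while plan is not None and pos != goal:
        step += 1
        seen = obstacles_seen_at.get(step)     # Perceive (simulated camera input)
        if seen is not None:
            grid[seen[0]][seen[1]] = 1         # update the map
            if seen in plan:
                plan = bfs_path(grid, pos, goal)  # Replan around the obstacle
                if plan is None:
                    break                      # boxed in: stop
        pos = plan[1]                          # Act: advance one cell
        plan = plan[1:]
        trace.append(pos)
    return trace

warehouse = [[0] * 5 for _ in range(5)]
route = navigate(warehouse, (0, 0), (0, 4), obstacles_seen_at={2: (0, 2)})
print(route)
```

When the obstacle appears in front of the robot at step 2, the remaining plan is discarded and a detour is computed from the robot's current position rather than from the start.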

Transforming Healthcare with Precision Vision

In healthcare, AI augments specialists such as radiologists. Deep learning algorithms spot subtle patterns in X-rays and pathology slides that humans might miss. For instance, systems can measure changes in a tumor across successive scans with sub-millimeter accuracy, or identify early signs of eye disease. The key development is multimodal diagnosis, in which AI combines imaging data with genetic profiles and patient records to recommend personalized treatment plans. This can significantly improve prognostic accuracy and patient outcomes.
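One way such a tumor measurement can be computed is sketched below, assuming a segmentation model has already produced binary 3-D masks for two scans and that voxel spacing is read from the scan metadata. The function names and synthetic masks are hypothetical, and the precision achievable in practice depends on scan resolution and segmentation quality, not on this arithmetic.

```python
import numpy as np

def lesion_volume_mm3(mask: np.ndarray, spacing_mm: tuple) -> float:
    """Volume of a binary 3-D mask: voxel count times the volume of one voxel.

    spacing_mm is (slice thickness, row spacing, column spacing) in millimetres.
    """
    voxel_volume = float(np.prod(spacing_mm))
    return float(np.count_nonzero(mask)) * voxel_volume

def equivalent_diameter_mm(volume_mm3: float) -> float:
    """Diameter of a sphere with the same volume: d = (6V / pi) ** (1/3)."""
    return (6.0 * volume_mm3 / np.pi) ** (1.0 / 3.0)

# Synthetic masks standing in for segmentation output on two scans (hypothetical).
spacing = (1.0, 0.5, 0.5)
baseline = np.zeros((40, 40, 40), dtype=bool)
baseline[10:20, 10:20, 10:20] = True          # 1000 voxels
followup = np.zeros((40, 40, 40), dtype=bool)
followup[10:20, 10:22, 10:22] = True          # 1440 voxels

v0 = lesion_volume_mm3(baseline, spacing)
v1 = lesion_volume_mm3(followup, spacing)
change_pct = 100.0 * (v1 - v0) / v0
print(f"{v0:.1f} -> {v1:.1f} mm^3 ({change_pct:+.1f}%), "
      f"equivalent diameter {equivalent_diameter_mm(v0):.2f} -> {equivalent_diameter_mm(v1):.2f} mm")
```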

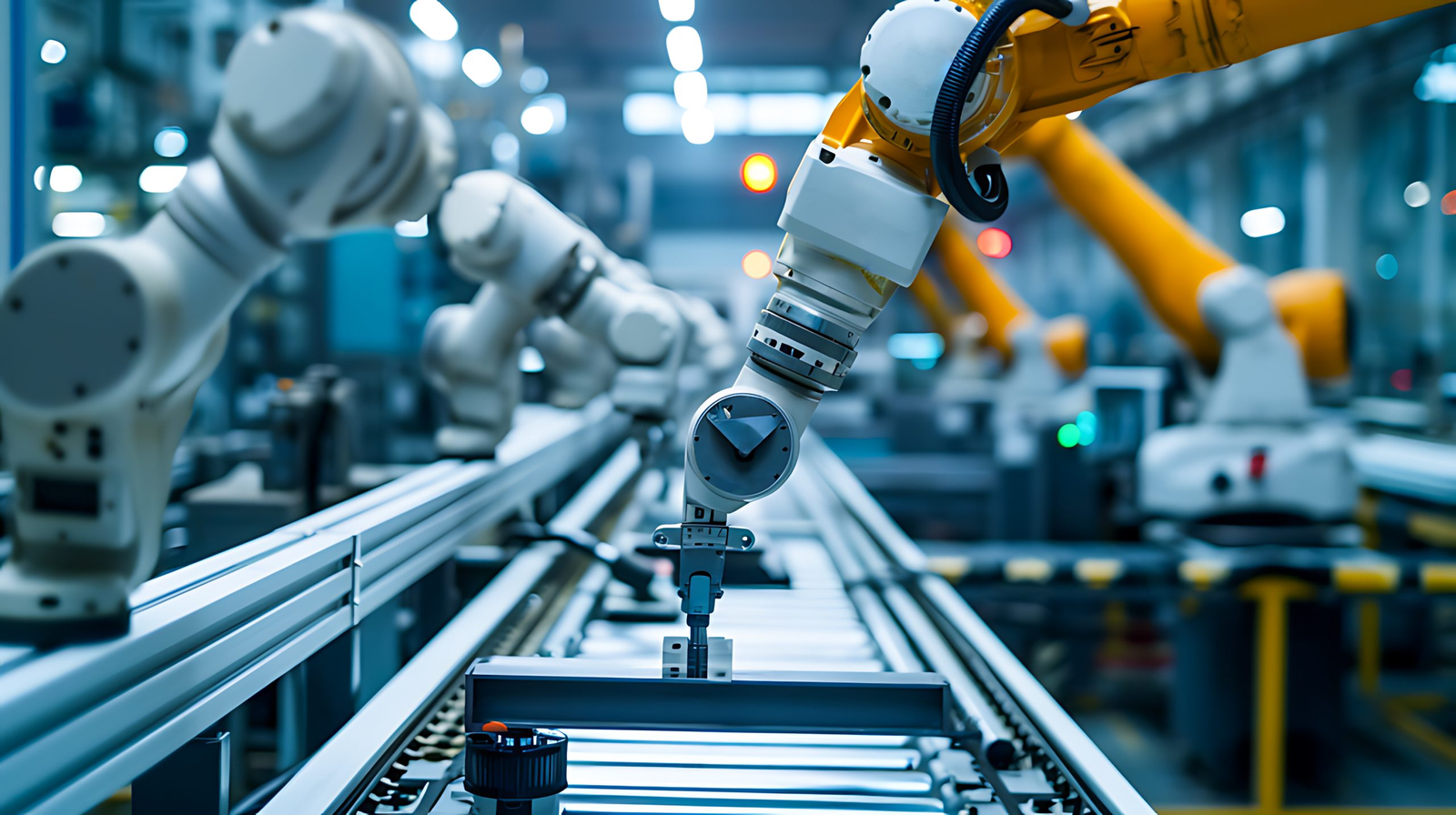

Building the Autonomous Factory Floor

The Industry 4.0 vision is becoming a reality. Vision-guided robots handle complex assembly, while AI analyzes visual and thermal feeds to predict machine failures, sometimes weeks in advance. Computer vision also improves worker safety by detecting protocol breaches in real time. Widespread 5G adoption is crucial here: it allows fast transmission of high-definition video to edge computing devices, enabling immediate analysis and control across large factory campuses, a core goal of modern factory automation.
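Failure prediction from thermal feeds can be illustrated with the simplest possible model: fit a linear trend to a component's daily peak temperature and extrapolate to a limit. This is a hypothetical sketch; the threshold, the readings, and the function name are assumptions, and production systems typically use richer models than a straight-line fit.

```python
import numpy as np

def days_until_threshold(days, peak_temps_c, threshold_c):
    """Estimate how many days remain before a linear temperature trend reaches
    threshold_c. Returns None if the trend is flat or cooling."""
    days = np.asarray(days, dtype=float)
    temps = np.asarray(peak_temps_c, dtype=float)
    # np.polyfit with deg=1 returns [slope, intercept].
    slope, intercept = np.polyfit(days, temps, deg=1)
    if slope <= 0:
        return None
    crossing_day = (threshold_c - intercept) / slope
    # Never report a negative lead time; 0 means the trend has already crossed.
    return max(0.0, crossing_day - days[-1])

# Hypothetical readings: a bearing housing warming by about 0.5 degC per day.
days = np.arange(10)
peaks = 60.0 + 0.5 * days
print(days_until_threshold(days, peaks, threshold_c=75.0))
```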

Smart Mobility and Infrastructure Powered by AI

Self-driving vehicles remain the most demanding test of integrated AI perception. Modern systems fuse camera, LiDAR, and radar data to build a comprehensive model of their surroundings. The 2025 advance is “scene understanding”: rather than merely detecting a cyclist, the AI predicts the cyclist’s likely path and intent. Similarly, smart cities use fixed camera networks to optimize traffic, manage crowds, and strengthen security through behavioral analytics. However, these gains must be balanced against strict privacy standards, a key challenge for public trust.
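Combining sensors can be illustrated with inverse-variance weighting, the static single-measurement case of a Kalman filter update: each sensor's estimate is weighted by how much it is trusted. The sketch assumes independent Gaussian errors and uses made-up variances; real perception stacks fuse tracked objects over time rather than single range readings.

```python
def fuse_estimates(estimates):
    """Inverse-variance weighted fusion of independent estimates of one quantity.

    estimates: list of (value, variance) pairs.
    Returns (fused_value, fused_variance).
    """
    weights = [1.0 / variance for _, variance in estimates]
    total_weight = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, estimates)) / total_weight
    # The fused variance is never larger than the best single sensor's variance.
    return fused_value, 1.0 / total_weight

# Hypothetical range-to-cyclist readings in metres: (value, variance).
readings = {
    "camera": (20.6, 1.0),   # assumed noisier than the active sensors
    "lidar": (20.0, 0.01),
    "radar": (20.3, 0.25),
}
distance, variance = fuse_estimates(list(readings.values()))
print(f"fused range {distance:.2f} m, variance {variance:.4f} m^2")
```

The fused estimate lands close to the LiDAR reading because it carries by far the largest weight, yet the camera and radar still tighten the combined uncertainty.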

Navigating Critical Challenges and Ethics

This progress also introduces serious challenges. Bias in training data remains a major issue: a model trained on faces from a narrow demographic range is likely to perform unevenly across groups. To address this, the industry is adopting synthetic data and algorithmic audits. In addition, the immense energy needed to train large models conflicts with sustainability goals, which is spurring research into more efficient architectures such as spiking neural networks. Finally, granting autonomy to AI raises vital questions about accountability and safety. Human oversight remains essential for high-stakes decisions in plant control systems.
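An algorithmic audit can start as simply as comparing accuracy across groups. The sketch below computes per-group accuracy and flags the model when the gap between the best- and worst-served groups exceeds a tolerance. The 5-point tolerance and the toy labels are hypothetical, and real audits usually track several metrics, such as false positive and false negative rates, rather than accuracy alone.

```python
from collections import defaultdict

def per_group_accuracy(y_true, y_pred, groups):
    """Accuracy computed separately for each group label."""
    correct, total = defaultdict(int), defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        total[group] += 1
        correct[group] += int(truth == pred)
    return {g: correct[g] / total[g] for g in total}

def fairness_audit(y_true, y_pred, groups, max_gap=0.05):
    """Flag the model if best- and worst-group accuracy differ by more than max_gap."""
    accuracy = per_group_accuracy(y_true, y_pred, groups)
    gap = max(accuracy.values()) - min(accuracy.values())
    return accuracy, gap, gap <= max_gap

# Toy evaluation set with a hypothetical group attribute per sample.
y_true = [1, 0, 1, 0, 1, 0, 1, 0]
y_pred = [1, 0, 1, 0, 1, 1, 0, 0]
groups = ["A"] * 4 + ["B"] * 4
accuracy, gap, passed = fairness_audit(y_true, y_pred, groups)
print(accuracy, gap, "PASS" if passed else "FAIL")
```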

Future Outlook: Anticipatory Systems and Convergence

The next frontier is predictive AI: research focuses on models that can forecast future states, such as machine wear or traffic flow, from current visual data. Meanwhile, the convergence of AI with Extended Reality (XR) is expected to create intelligent visual interfaces for design and remote work. Success will require more than technical innovation; it demands strong governance to ensure these powerful vision and decision tools are used safely, ethically, and for society’s benefit. In my analysis, the firms that lead in establishing these trustworthy frameworks will gain a significant long-term advantage.

Practical Application Scenarios

Case 1: Automotive Manufacturing: A vision system on a robotic welder not only verifies weld placement but also analyzes the weld pool’s thermal signature in real time. It then adjusts welding parameters to prevent defects, an example of closed-loop control in automation.
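The closed loop in Case 1 can be sketched as a sense-decide-act cycle. Everything here is hypothetical: the toy first-order `toy_weld_pool` model stands in for real weld physics, the 1500 degC setpoint and the gain are invented, and the controller uses simple integral (incremental) action, nudging the current in proportion to the temperature error each cycle, rather than any specific vendor's control law.

```python
def controller_step(current_a, measured_c, setpoint_c, ki, min_a, max_a):
    """Incremental (integral-action) update: change the welding current in
    proportion to the temperature error, then clamp it to the allowed range."""
    error_c = setpoint_c - measured_c
    return min(max(current_a + ki * error_c, min_a), max_a)

def toy_weld_pool(temp_c, current_a, alpha=0.5, c_per_a=10.0):
    """Hypothetical first-order plant: temperature relaxes toward c_per_a * current."""
    return temp_c + alpha * (c_per_a * current_a - temp_c)

def run_closed_loop(setpoint_c=1500.0, steps=100):
    temp_c, current_a = 1000.0, 100.0
    history = []
    for _ in range(steps):
        # Sense + decide: the vision system reports the pool temperature.
        current_a = controller_step(current_a, temp_c, setpoint_c, ki=0.02, min_a=50.0, max_a=250.0)
        # Act: the new current drives the (simulated) pool temperature.
        temp_c = toy_weld_pool(temp_c, current_a)
        history.append((current_a, temp_c))
    return history

final_current, final_temp = run_closed_loop()[-1]
print(f"current {final_current:.1f} A, pool temperature {final_temp:.1f} C")
```

Integral action is used here because, unlike a purely proportional rule, it drives the steady-state temperature error to zero in this toy plant.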

Case 2: Pharmaceutical Packaging: An AI vision agent monitors blister packs on a high-speed line. It reads batch codes, checks pill count and orientation, and diverts any faulty packs. This integrates quality control directly into the production sequence, reducing waste.
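The pill-count and batch-code checks in Case 2 can be sketched as follows, assuming an upstream model has already produced a binary mask of pill pixels and an OCR step has returned the batch code as a string. Pills are counted as 4-connected regions in the mask; the function names and data are hypothetical, and the orientation check is omitted for brevity.

```python
from collections import deque

def count_blobs(mask):
    """Count 4-connected regions of 1s in a binary mask (list of lists)."""
    rows, cols = len(mask), len(mask[0])
    seen = [[False] * cols for _ in range(rows)]
    blobs = 0
    for r in range(rows):
        for c in range(cols):
            if mask[r][c] and not seen[r][c]:
                blobs += 1                     # new, unvisited region found
                seen[r][c] = True
                queue = deque([(r, c)])
                while queue:                   # flood-fill the whole region
                    y, x = queue.popleft()
                    for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                        if 0 <= ny < rows and 0 <= nx < cols and mask[ny][nx] and not seen[ny][nx]:
                            seen[ny][nx] = True
                            queue.append((ny, nx))
    return blobs

def inspect_pack(mask, batch_code_read, expected_batch, expected_pills):
    """Return reasons to divert the pack; an empty list means it passes."""
    reasons = []
    if batch_code_read != expected_batch:
        reasons.append("batch code mismatch")
    pills = count_blobs(mask)
    if pills != expected_pills:
        reasons.append(f"pill count {pills} != {expected_pills}")
    return reasons

# Hypothetical segmentation mask of one pack: 1 = pill pixel. One cavity is empty.
pack_mask = [
    [1, 1, 0, 1, 1],
    [0, 0, 0, 0, 0],
    [1, 1, 0, 0, 0],
]
reasons = inspect_pack(pack_mask, "B123", expected_batch="B123", expected_pills=4)
print("DIVERT: " + "; ".join(reasons) if reasons else "PASS")
```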

Frequently Asked Questions (FAQs)

Q1: How does modern AI vision differ from traditional systems?

Traditional computer vision used fixed, rule-based programming. Modern AI vision employs deep learning to develop a flexible understanding from examples, allowing it to interpret context and link perception to action in dynamic industrial environments.
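As a deliberately tiny analogy for this difference, the sketch below contrasts a hand-coded brightness rule with a threshold learned from labeled examples. It is not deep learning: a single learned number on one hand-picked feature stands in for the millions of parameters a neural network learns directly from pixels, and the data and function names are hypothetical.

```python
def rule_based_is_defect(brightness, threshold=128):
    """Traditional approach: an engineer hard-codes the decision threshold."""
    return brightness < threshold

def learn_threshold(samples):
    """Learn the threshold from labeled (brightness, is_defect) examples by choosing
    the cut-off with the fewest training errors (a one-feature decision stump)."""
    values = sorted({value for value, _ in samples})
    best_threshold, best_errors = None, float("inf")
    for candidate in values + [values[-1] + 1]:
        errors = sum((value < candidate) != is_defect for value, is_defect in samples)
        if errors < best_errors:
            best_threshold, best_errors = candidate, errors
    return best_threshold

# Hypothetical samples after a lighting change: defects are still darker than good
# parts, but everything is now brighter than the hand-tuned rule expects.
samples = [(140, True), (150, True), (160, True), (200, False), (210, False), (220, False)]
learned = learn_threshold(samples)
rule_errors = sum(rule_based_is_defect(v) != d for v, d in samples)
learned_errors = sum((v < learned) != d for v, d in samples)
print(learned, rule_errors, learned_errors)
```

The fixed rule misses every defect once conditions shift, while the learned cut-off simply re-fits to the new examples.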

Q2: What role does 5G play in industrial AI vision?

5G provides the high bandwidth and ultra-low latency needed to stream high-definition video from many cameras to central or edge processors. This is essential for real-time analysis and rapid response in factory automation and control systems.
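A quick way to check whether a camera deployment fits a wireless link is a bandwidth and latency budget. In the sketch below, every number (camera count, per-stream bitrate, link capacity, 70% headroom, and the per-stage latencies) is a hypothetical planning input, not a 5G specification.

```python
def uplink_budget(num_cameras, mbps_per_stream, link_capacity_mbps, headroom=0.7):
    """Compare aggregate camera bitrate with the share of link capacity we plan to use.

    headroom < 1 reserves capacity for retransmissions and other traffic (assumed value).
    """
    demand_mbps = num_cameras * mbps_per_stream
    usable_mbps = link_capacity_mbps * headroom
    return demand_mbps, usable_mbps, demand_mbps <= usable_mbps

def within_deadline(network_ms, inference_ms, actuation_ms, deadline_ms):
    """End-to-end latency check: transmit, analyze, and act before the deadline."""
    total_ms = network_ms + inference_ms + actuation_ms
    return total_ms, total_ms <= deadline_ms

# All figures below are hypothetical planning inputs.
demand, usable, fits = uplink_budget(num_cameras=40, mbps_per_stream=8.0, link_capacity_mbps=500.0)
frame_ms = 1000.0 / 30  # one frame interval at 30 fps
total, on_time = within_deadline(network_ms=10.0, inference_ms=15.0, actuation_ms=5.0, deadline_ms=frame_ms)
print(f"bandwidth: {demand:.0f} of {usable:.0f} Mbps usable -> {'OK' if fits else 'over budget'}")
print(f"latency: {total:.1f} ms vs {frame_ms:.1f} ms frame interval -> {'OK' if on_time else 'too slow'}")
```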

Q3: What are Vision-Language Models used for in industry?

VLMs allow workers to interact with systems using natural language. For example, a technician could ask a maintenance system, “Show me the component that overheated yesterday,” and the AI could retrieve the relevant thermal video footage and highlight the part.
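The retrieval step behind such a request can be sketched once the language part is done. Here we assume, hypothetically, that the VLM has already turned the technician's question into a structured query (a date and a temperature limit); the event-log schema, component names, clip IDs, and the 90 degC limit are all invented for illustration.

```python
from dataclasses import dataclass
from datetime import date, datetime, timedelta

@dataclass
class ThermalEvent:
    component: str
    timestamp: datetime
    peak_temp_c: float
    clip_id: str  # hypothetical reference to the stored thermal video segment

def find_overheated(events, day, limit_c):
    """Events on `day` whose peak temperature exceeded limit_c, hottest first."""
    hits = [e for e in events if e.timestamp.date() == day and e.peak_temp_c > limit_c]
    return sorted(hits, key=lambda e: e.peak_temp_c, reverse=True)

# Hypothetical structured query that a VLM might produce from
# "Show me the component that overheated yesterday".
today = date(2025, 6, 12)
query = {"day": today - timedelta(days=1), "limit_c": 90.0}

log = [
    ThermalEvent("pump-3 motor", datetime(2025, 6, 11, 14, 5), 97.5, "clip-0412"),
    ThermalEvent("conveyor-1 gearbox", datetime(2025, 6, 11, 9, 30), 71.0, "clip-0398"),
    ThermalEvent("pump-3 motor", datetime(2025, 6, 10, 16, 45), 93.0, "clip-0377"),
]
for event in find_overheated(log, query["day"], query["limit_c"]):
    print(event.component, event.timestamp, event.clip_id)
```

Separating language understanding from a plain, testable query like this keeps the part that touches plant data deterministic and auditable.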

Q4: How can bias in AI vision models be reduced?

Mitigation strategies include using diverse, representative training datasets, generating synthetic data to fill gaps, and implementing continuous algorithmic fairness audits throughout the model’s development and deployment lifecycle.
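The "fill gaps" step can be sketched as topping up under-represented groups to the size of the largest one. In this hypothetical example, horizontal flips with mild brightness jitter stand in for real synthetic-data generation such as rendering or generative models. Augmented copies of a few originals add far less genuine diversity than new data, so this shows only the bookkeeping, not a complete remedy.

```python
import numpy as np

def balance_with_augmentation(images_by_group, rng):
    """Top up each group to the size of the largest group with augmented copies."""
    target = max(len(imgs) for imgs in images_by_group.values())
    balanced = {}
    for group, imgs in images_by_group.items():
        extra = []
        for i in range(target - len(imgs)):
            source = imgs[i % len(imgs)]
            # Mirror the image and jitter brightness by up to +/-10% (stand-in augmentation).
            augmented = np.fliplr(source) * rng.uniform(0.9, 1.1)
            extra.append(np.clip(augmented, 0.0, 1.0))
        balanced[group] = list(imgs) + extra
    return balanced

# Hypothetical dataset: grayscale images with values in [0, 1], grouped by attribute.
rng = np.random.default_rng(0)
dataset = {
    "group_a": [rng.random((8, 8)) for _ in range(5)],
    "group_b": [rng.random((8, 8)) for _ in range(2)],
}
balanced = balance_with_augmentation(dataset, rng)
print({group: len(imgs) for group, imgs in balanced.items()})
```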

Q5: What skills are vital for implementing AI vision solutions?

Successful implementation requires a cross-functional team with skills in machine learning, domain-specific knowledge (e.g., process engineering), data pipeline management, and a strong understanding of operational technology and cybersecurity for integration.
