XELA Robotics Unveils Advanced Touch Sensing for Next-Generation Automation

Breakthrough Tactile Technology Debuts at CES 2026

XELA Robotics launched its uSkin 3D tactile sensing platform at CES 2026. The platform gives humanoid and industrial robots continuous, human-like touch feedback, addressing a long-standing gap in robotic manipulation and real-world automation. The technology is intended to improve how robots interact with physical objects in dynamic environments.

How uSkin Mimics Human Touch for Robots

The uSkin system integrates layered, elastomer-based sensors directly into robot grippers and hands. These sensors measure contact forces, object shape, and subtle movements across the entire grasping surface. As a result, robots gain a detailed, real-time picture of their grip, which is crucial for handling delicate or irregular items without damaging them.

Author’s Insight: The move from binary grip detection to nuanced 3D force mapping represents a fundamental shift. It enables a new level of physical intelligence, or “Physical AI,” in which machines adapt their grip in real time, much as a human hand adjusts to a slipping glass.
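To make the slipping-glass analogy concrete, here is a minimal sketch of how per-point 3D force readings could drive a grip adjustment. It is not XELA's algorithm or API: the function name `adjust_grip`, the `(fx, fy, fz)` frame layout, the friction coefficient `mu`, and the `margin` and `step` values are all assumptions chosen for illustration. The idea is a standard friction-cone check: if the shear force approaches the limit that friction can hold at the current normal force, squeeze slightly harder.

```python
import math


def adjust_grip(taxels, grip_force, mu=0.5, margin=0.8, step=0.2):
    """Return an updated grip-force command in newtons.

    taxels: list of (fx, fy, fz) readings for one tactile frame, where
            fz is the normal force and fx, fy are shear forces.
            (Hypothetical layout, not a vendor data format.)
    mu:     assumed friction coefficient between fingertip and object.
    margin: fraction of the friction limit treated as "about to slip".
    step:   force increment applied when slip is imminent.
    """
    net_fx = sum(t[0] for t in taxels)
    net_fy = sum(t[1] for t in taxels)
    normal = sum(t[2] for t in taxels)
    shear = math.hypot(net_fx, net_fy)

    if normal <= 0.0:
        return grip_force  # no contact detected, leave the command alone
    if shear > margin * mu * normal:
        return grip_force + step  # close to the friction limit: tighten
    return grip_force  # stable grasp: keep the current force


stable = [(0.1, 0.0, 1.0)] * 4    # low shear relative to normal force
slipping = [(0.5, 0.0, 1.0)] * 4  # shear exceeds the assumed safety margin

print(adjust_grip(stable, 2.0))
print(adjust_grip(slipping, 2.0))
```

A binary contact switch cannot support this check, because it needs both the normal and the shear components of the contact force.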

Designed for Practical Integration, Not Just Research

XELA emphasizes a practical, hardware-agnostic approach to commercialization. The sensors are compact, durable, and engineered for straightforward integration into existing systems, which reduces the development burden for OEMs and integrators. The technology already interfaces with hardware from established brands such as Robotiq, Weiss Robotics, and Wonik Robotics.

Target Industries and Immediate Applications

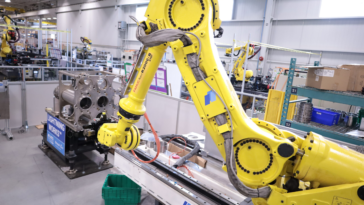

Initial deployments focus on sectors where dexterity and reliability are paramount. In manufacturing, uSkin can guide robots assembling fragile components. In logistics and warehousing, it enables safe handling of diverse, unstructured items. In agriculture, robots can use it to harvest produce without bruising. In each case, the aim is to reduce operational errors and downtime in factory automation and beyond.

Driving the Future of Dexterous Manipulation

The integration with the Tesollo DG-5F anthropomorphic hand highlights the trend toward human-like dexterity. According to CEO Alexander Schmitz, the goal is to make advanced touch sensing broadly available across automation. The technology is positioned as a key enabler for the next wave of collaborative and autonomous robots in industrial settings.

Solution Scenario: Electronics Assembly Line

Consider a circuit board assembly station. A robot with uSkin-equipped grippers picks up a delicate microcontroller. The tactile sensors detect minute force changes as the chip aligns with its socket. The control system uses this feedback to make micro-corrections, aiming for consistent, damage-free placement. This addresses a major pain point in high-precision manufacturing.
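The micro-correction step in this scenario can be sketched as a simple proportional rule: nudge the gripper away from the direction in which the socket is pushing on the chip. This is an illustrative assumption, not XELA's or any integrator's control law; the function name `lateral_correction`, the gain, the deadband, and the step limit are all hypothetical values.

```python
def lateral_correction(net_fx, net_fy, gain_mm_per_n=0.05,
                       max_step_mm=0.1, deadband_n=0.02):
    """Map net shear force on the fingertips (N) to a small XY move (mm).

    A lateral force during insertion suggests the part is pressing against
    one side of the socket, so the move opposes the measured force.
    """
    def axis(force):
        if abs(force) < deadband_n:
            return 0.0  # ignore sensor noise around zero
        step = -gain_mm_per_n * force
        # Clamp so that a single correction can never be a large jump.
        return max(-max_step_mm, min(max_step_mm, step))

    return axis(net_fx), axis(net_fy)


print(lateral_correction(0.01, 0.0))   # inside the deadband: no motion
print(lateral_correction(1.0, -10.0))  # small x move, clamped y move
```

In a real cell this would run inside the motion controller's loop, and the gain and limits would need tuning for the specific part and gripper.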

FAQ: XELA uSkin Tactile Sensing Technology

Q1: How does uSkin differ from traditional force/torque sensors?

A: Traditional force/torque sensors typically measure the net force and torque at a single point, usually the wrist. uSkin provides a dense, 3D map of forces across the entire contact surface (fingertips, phalanges, palm), offering much richer data for manipulation.
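The difference can be illustrated with a short sketch. Summing a force map over all sensing points yields a single net force, comparable to what a single-point sensor reports, but the map also shows where on the surface the contact sits. The `(x_mm, y_mm, fx, fy, fz)` tuple layout and the function name `summarize_contact` are assumptions for illustration, not a uSkin data format.

```python
def summarize_contact(taxels):
    """Reduce a tactile frame to a net force and a center of pressure.

    taxels: list of (x_mm, y_mm, fx, fy, fz), one entry per sensing point.
            (Hypothetical layout.)
    Returns ((net_fx, net_fy, net_fz), (cop_x_mm, cop_y_mm)), with the
    center of pressure set to None when there is no normal load.
    """
    net = tuple(sum(t[i] for t in taxels) for i in (2, 3, 4))
    fz_total = net[2]
    if fz_total <= 0:
        return net, None
    # Center of pressure: taxel positions weighted by normal force.
    cop_x = sum(t[0] * t[4] for t in taxels) / fz_total
    cop_y = sum(t[1] * t[4] for t in taxels) / fz_total
    return net, (cop_x, cop_y)


frame = [
    (0.0, 0.0, 0.0, 0.0, 1.0),  # light contact at the origin
    (4.0, 0.0, 0.0, 0.0, 3.0),  # heavier contact 4 mm along x
]
net_force, center = summarize_contact(frame)
print(net_force)  # (0.0, 0.0, 4.0)
print(center)     # (3.0, 0.0)
```

The net force alone cannot reveal that the load is concentrated toward one side of the pad; the per-point map can.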

Q2: Is this technology only for humanoid robot hands?

A: No. XELA’s hardware-agnostic approach means uSkin can be integrated into various end-effectors, including standard two-finger industrial grippers, making it applicable across a wide range of industrial automation tasks.

Q3: What is “Physical AI” in this context?

A: It refers to the robot’s ability to intelligently interact with the physical world using sensory feedback (like touch) to make real-time decisions about manipulation, such as adjusting grip force or re-grasping an object.

Q4: What are the main industries that will benefit first?

A: Manufacturing (especially electronics and pharmaceuticals), logistics (piece-picking), and agriculture are the primary targets. In these sectors, fragility and variability are critical handling challenges.

Q5: Is the system ready for commercial deployment?

A: Yes. XELA reports the sensors are already in use in academic and commercial pilot projects. Integration with partners like Robotiq and Tesollo indicates a clear path to broader commercial availability in 2026.
